Sloth: A Minimal-Effort Kernel for Embedded Systems

Sloth on Time: Efficient Hardware-Based Scheduling for Time-Triggered RTOS

Originally, the Sloth project focused only on event-triggered real-time systems. With the development of Sloth on Time, the paradigms of time-triggered operation were introduced as well.

In keeping with the design principles of Sloth, time-triggered operation is implemented with the goal of maximizing the use of available hardware components and thereby minimizing the software effort required at run time. Traditional systems employ a single hardware timer on which the timing demands of the entire application are multiplexed in software. Sloth on Time abandons this approach and instead exploits the hardware timer arrays available on many modern microcontroller platforms: the timing requirements of the configuration are statically mapped to individual timer cells, which eliminates the need for software intervention at run time.

Hardware Requirements

In addition to the platform requirements imposed by the event-triggered Sloth system, Sloth on Time depends on two properties of the underlying hardware:

- The platform must provide an array of independently operating timer cells that is sufficiently large to map all time-based requirements of the application onto individual timers.

- Each timer cell in this array must be able to trigger interrupts at a configurable priority, so that specific timer events can activate tasks.

The first requirement leaves some room for compromise: if the timer array is too small, the goal of minimizing software effort can be relaxed by selectively falling back to multiplexing timers in software, trading additional run-time overhead for fewer allocated hardware units.
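This trade-off can be illustrated as an allocation step at configuration time. The following C code is a minimal sketch under our own assumptions, not Sloth on Time's actual system generator: `timer_allocation` and `allocate_timers` are hypothetical names, and the fallback policy of reserving the last cell as a shared, software-multiplexed timer is only one possible choice.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical summary of how timing requirements are spread over a timer array. */
typedef struct {
    size_t dedicated;    /* requirements that get a timer cell of their own */
    size_t multiplexed;  /* requirements served in software by one shared cell */
    size_t cells_used;   /* total timer cells consumed */
} timer_allocation;

/* Give every requirement its own cell while enough cells exist; otherwise
 * reserve the last cell as a software-multiplexed timer for the remainder
 * (an assumed fallback policy, chosen for illustration only). */
static timer_allocation allocate_timers(size_t requirements, size_t cells)
{
    timer_allocation a = {0, 0, 0};
    assert(cells > 0 || requirements == 0);

    if (requirements <= cells) {
        a.dedicated = requirements;
        a.cells_used = requirements;
    } else {
        a.dedicated = cells - 1;                    /* last cell is shared */
        a.multiplexed = requirements - a.dedicated;
        a.cells_used = cells;
    }
    return a;
}
```

With 10 requirements and 8 cells, for example, 7 requirements get dedicated cells and the remaining 3 share the eighth cell, which then needs software at run time to reprogram it after each expiry.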

Sloth on Time Design

When the system is configured and tailored to the application, Sloth on Time maps the time-based requirements given by the configuration to the hardware components available on the target platform. This means that for each time-triggered task activation, deadline, or execution budget assigned to a task, a timer cell is allocated to perform this particular action at run time.

When the application starts, an initialization phase programs all allocated timer cells according to their assigned actions and starts their operation. At run time, the dispatcher table is then driven solely by the independent operation of these timer cells and requires no software intervention apart from the regular interrupt handlers that dispatch tasks whenever they are triggered.
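The mapping and initialization described above can be modeled in a few lines of C. This sketch assumes a generator that emits a static table with one entry per allocated timer cell; the types, the example schedule, and the functions are hypothetical, and the cells are simulated in software rather than accessed through real registers. Starting only activation cells at initialization, while deadline and budget cells stay stopped until their task runs, is likewise an assumption of this sketch.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Kinds of timing actions a cell can be assigned to (hypothetical names). */
typedef enum { ACT_TASK_ACTIVATION, ACT_DEADLINE, ACT_BUDGET } cell_action;

/* One entry of the static table emitted at configuration time. */
typedef struct {
    cell_action action;
    uint32_t    offset;    /* ticks from round start to the first expiry */
    uint32_t    period;    /* ticks between expiries (dispatcher round length) */
    uint8_t     irq_prio;  /* example priority of the interrupt raised on expiry */
} cell_config;

/* Simulated cell state; on real hardware this would live in timer registers. */
typedef struct {
    cell_config cfg;
    bool        running;
} timer_cell;

/* Example: two task activations and one deadline in a 100-tick round. */
static const cell_config schedule[] = {
    { ACT_TASK_ACTIVATION,  0, 100, 10 },
    { ACT_TASK_ACTIVATION, 40, 100, 10 },
    { ACT_DEADLINE,        30, 100, 10 },
};
#define NUM_CELLS (sizeof schedule / sizeof schedule[0])

static timer_cell cells[NUM_CELLS];

/* Initialization phase: program every allocated cell exactly once.
 * Activation cells start immediately; deadline and budget cells stay
 * stopped until software enables them for a running task (assumption). */
static void init_timer_cells(void)
{
    for (size_t i = 0; i < NUM_CELLS; i++) {
        assert(schedule[i].period > 0);
        cells[i].cfg = schedule[i];
        cells[i].running = (schedule[i].action == ACT_TASK_ACTIVATION);
    }
}

/* Hardware model: does cell i raise its interrupt at tick t?
 * Nothing reprograms the cell after init_timer_cells(). */
static bool cell_fires(size_t i, uint32_t t)
{
    assert(i < NUM_CELLS);
    const cell_config *c = &cells[i].cfg;
    return cells[i].running && t >= c->offset &&
           (t - c->offset) % c->period == 0;
}
```

Once `init_timer_cells` has run, every expiry follows from the static offset and period alone, so no software has to touch the cells during the dispatcher round.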

In order to obtain the stack-based scheduling behavior prescribed by the OSEKtime standard while still leaving the scheduling decision to the interrupt controller, Sloth on Time divides the task priority space into three partitions:

- a trigger priority for dispatching newly activated time-triggered tasks

- below that, a single execution priority for all time-triggered tasks

- and further below, any priorities assigned to event-triggered objects configured as part of a mixed operation mode

The interrupt handler of every time-triggered task is then extended so that it immediately lowers the current priority to the execution priority for time-triggered tasks. This ensures that the activation of a time-triggered task always preempts the previously running task, since every running task executes at a priority below the trigger priority.
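The priority partitioning and the handler prologue can be sketched with a small model of the interrupt controller. The numeric priority values, the model of the current priority level, and all function names are hypothetical; on real hardware, lowering the priority would typically be a write to the CPU's current-priority register.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical priority layout; a higher number means a higher priority. */
enum {
    ET_PRIO_MAX  = 5,  /* event-triggered objects use priorities 1..5 */
    TT_EXEC_PRIO = 6,  /* shared execution priority of all TT tasks */
    TT_TRIG_PRIO = 7   /* priority of every TT activation interrupt */
};

/* Model of the CPU's current priority level (0 = background). */
static uint8_t current_prio = 0;

/* The interrupt controller dispatches a pending interrupt only if its
 * priority exceeds the current level. */
static bool irq_would_preempt(uint8_t irq_prio)
{
    return irq_prio > current_prio;
}

/* Taking an interrupt: its handler starts at the interrupt's priority. */
static void take_irq(uint8_t irq_prio)
{
    assert(irq_would_preempt(irq_prio));
    current_prio = irq_prio;
}

/* First action of every TT task's handler: drop to the execution priority,
 * so that the next TT activation at TT_TRIG_PRIO can preempt this task. */
static void tt_task_prologue(void)
{
    current_prio = TT_EXEC_PRIO;
}
```

Without the prologue, a task would keep running at `TT_TRIG_PRIO`, `irq_would_preempt(TT_TRIG_PRIO)` would return false, and a subsequent time-triggered activation could not preempt it.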

Deadline Monitoring

The approach to deadline monitoring in Sloth on Time also benefits from the individual assignment of timing actions to timer cells. Traditional systems interrupt the current control flow whenever a deadline expires in order to verify that it has been met. Sloth on Time avoids such unnecessary interruptions by enabling the timer cell assigned to a deadline only as long as the monitored task is actually running, so the cell can only fire when the deadline has in fact been violated. This incurs a slight overhead on task termination, but it eliminates the undesired scenario of preempting a high-priority task on behalf of a low-priority task merely to check for a deadline violation.
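The deadline monitoring scheme can be sketched as follows. The `deadline_cell` type, the hook names, and the simulated `tick` function are hypothetical, and the sketch assumes that a task is never activated again while it is still active. Because the cell's interrupt is enabled only between activation and termination, every expiry that reaches the CPU already signals a violation.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical deadline cell: its counter follows the dispatcher round, but
 * its interrupt is enabled only while the monitored task is active. */
typedef struct {
    uint32_t deadline_tick;  /* expiry point within the dispatcher round */
    bool     irq_enabled;
} deadline_cell;

static unsigned violations = 0;

/* Hook run when the monitored task is activated. */
static void task_activated(deadline_cell *d)
{
    assert(!d->irq_enabled);  /* assumed: no re-activation while active */
    d->irq_enabled = true;
}

/* Hook run on task termination: the slight extra cost paid on termination. */
static void task_terminated(deadline_cell *d)
{
    d->irq_enabled = false;
}

/* Hardware model for one tick within the round: an enabled cell that reaches
 * its deadline raises an interrupt, which can only mean a violation. */
static void tick(deadline_cell *d, uint32_t now)
{
    if (d->irq_enabled && now == d->deadline_tick)
        violations++;  /* a real system would invoke its violation handler */
}
```

A task that terminates before its deadline never causes an interrupt; only an overrunning task does.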

Sloth on Time Implementation

On the reference platform for Sloth on Time, the TriCore TC1796, the behavior of timer cells is implemented by combining two adjacent local timer cells (LTC) in the general purpose timer array (GPTA) into an assembly that can be programmed to first delay a certain amount of time and then repeatedly trigger a specific interrupt at a fixed interval. With the help of global timer cells, the GPTA also offers a method for enabling and disabling a group of timer cells at once, thereby allowing precise control over the cells involved in a complete schedule table.
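The two-LTC assembly and the group enable can be modeled as below. This deliberately does not reproduce the TC1796's GPTA register interface: `ltc_pair`, `group_enable_mask`, and the functions are hypothetical stand-ins that only capture the behavior described above, namely an initial delay followed by periodic interrupts, and a single write that starts or stops all cells of a schedule table.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical model of one timer cell built from two adjacent LTCs:
 * the first contributes the initial delay, the second the period. */
typedef struct {
    uint32_t delay;     /* ticks before the first interrupt */
    uint32_t interval;  /* ticks between subsequent interrupts */
} ltc_pair;

/* Model of group control via a global cell: one bit per LTC pair, so all
 * cells of a schedule table start or stop with a single write. */
static uint32_t group_enable_mask = 0;

static void enable_group(uint32_t mask)  { group_enable_mask |= mask; }
static void disable_group(uint32_t mask) { group_enable_mask &= ~mask; }

/* Number of interrupts pair `idx` raises within the first `elapsed` ticks
 * after its group was enabled (0 while the group is disabled). */
static uint32_t interrupts_since_enable(const ltc_pair *p, unsigned idx,
                                        uint32_t elapsed)
{
    assert(p->interval > 0 && idx < 32);
    if (!(group_enable_mask & (UINT32_C(1) << idx)) || elapsed < p->delay)
        return 0;
    return 1 + (elapsed - p->delay) / p->interval;
}
```

With a delay of 10 ticks and an interval of 25 ticks, for example, the cell fires at ticks 10, 35, and 60 after its group is enabled.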

Performance of Sloth on Time and Advantages over Traditional Designs

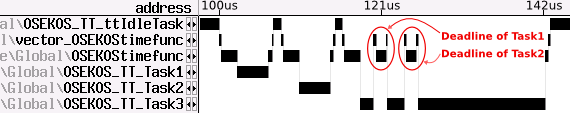

Comparing the execution traces of Sloth on Time and a commercial OSEKtime implementation shows that our system achieves better schedulability of the application and more predictable execution times, since unnecessary deadline check interrupts are avoided:

- Commercial OSEKtime system:

- Sloth on Time:

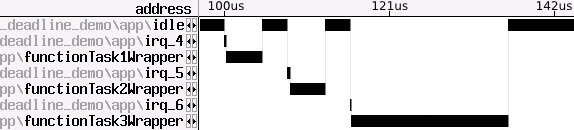

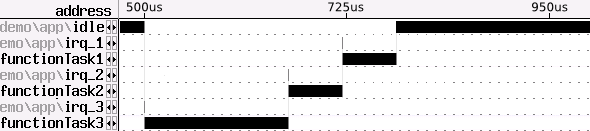

A similar issue arises with the time-based activation of low-priority tasks in an AUTOSAR application. Even while a high-priority task is running, traditional designs must interrupt it at these points to record the activation, without yet dispatching the low-priority task, as can be seen in the first figure below. In the commercial system we evaluated, this interruption amounts to 2,075 cycles. Sloth on Time is not affected by this: since the scheduling decision is made by the interrupt controller rather than on the CPU, no action is taken on behalf of the low-priority task until the running high-priority task has terminated.

- Commercial AUTOSAR system:

- Sloth on Time:

A quantitative analysis shows that the latencies of time-triggered task activations and of resuming preempted tasks are significantly lower than in commercially available kernels that follow traditional design principles. The speed-ups achieved by Sloth on Time range from 1.1 to 8.6 compared to a commercial OSEKtime implementation and from 6.0 to 171.4 compared to a commercial AUTOSAR system. In each case, the result depends on the specific system configuration and on the task transition being measured. For a more comprehensive presentation of our results and the evaluation setup, see our RTSS 2012 publication on Sloth on Time.